There’s a lot of discussion about how best to use AI for your business, the downsides, upsides, and blindsides. But what almost all the conversations have in common is that they default to text-based AI.

While most of us know some generative AI has the ability to be multimodal (i.e., it can take input or generate output from other “modes” than text), the other modes are often overlooked in favor of text-based instructions or transcription. So let’s take a moment and see if image and video generation is worth your time.

A Benchmark

Back in 2021, Ethan Mollick (if you’re interested in AI and not reading his stuff, go now > One Useful Thing) was on a flight with his daughter (who loved otters) and struggling to test an AI image generator due to poor wifi. This combination of circumstances led to the prompt: “Otter on a plane using Wifi.”

That prompt produced the image below left, using the best image generation tool of its time, VQGAN + CLIP.

Compare that with the image to the right, generated by Midjourney in April of this year, and the improvements are clear. We have moved through the era of weird ears and incorrect fingers to realistic hair and an animal face cute enough to make you consider otter adoption. (They need a bathtub, and I’m pretty sure they’re bitey. Just sayin’.)

MOAR Comparisons

If you were impressed by that, just wait. Midjourney, which made the image above, is a “diffusion”-based model, meaning it takes noise and renders an image from the chaos. These models are more prone to creating unique and even unintended images, sometimes working as a “reverse idea flow,” as Jakob Nielsen discussed in a recent article.

Newer image generation models (confusingly called “multimodal image generation” models) build an image one pixel at a time, allowing more fine-grained control from the text instructions. This means we can start with “Otter on a plane using Wifi” and tweak it with text-based instructions to, “make it a sea otter instead, give it a mohawk, they should be using a Razer gaming laptop.”

Takeaways

Where does this leave us? Well, for one thing, we can now work with AI to be both wildly creative (with idea generation stemming from diffusion models) and specific and accurate (with multimodal models). The combination of these two should be immensely powerful and – hopefully – immensely efficient for generating just the image you need for a specific scenario.

Obviously, the downsides still exist. Image generation models are orders of magnitude more energy intensive than text-based prompting (think 90-100 times as much energy, or more). There also remain concerns about how these models were trained and the effects of their use on artistic industries.

That said, there are circumstances and companies for which these tools are immensely helpful and worthwhile. Don’t sit out technological evolution out of guilt for something you didn’t do.

If you want to weigh the benefits for your business, consider joining me this week for Going AI: Selecting the Right Tools for Your Business.

More from Ethan Mollick

Colour Contrast

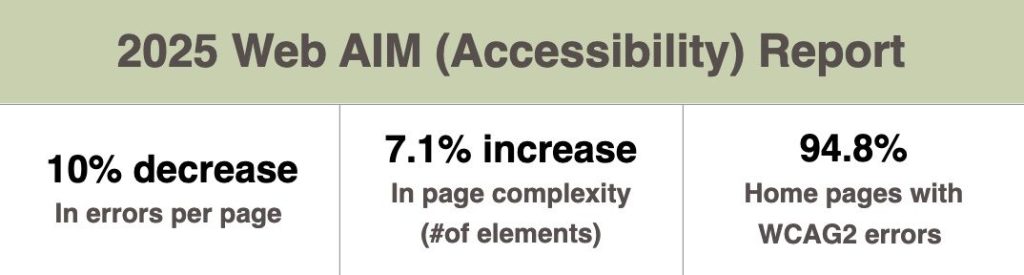

The 2025 WebAIM (Accessibility) Report is out and – once again – the biggest offender for accessibility was page color contrast. Or, if you prefer, “colour” contrast.

And indeed, to help us out with this, I’m turning to our buddies in the UK for the Best Thing Ever: a quick Chrome Extension for testing your page color contrast. The tool will show if your page passes and at what levels, and help you find a better color if it doesn’t.

Watch the video demo below, or get started with the tool.
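If you’re curious what a contrast checker is doing under the hood, here is a minimal Python sketch. It assumes the tool follows the WCAG 2.x contrast-ratio formula and the usual AA/AAA thresholds (4.5:1 and 7:1 for normal text, 3:1 and 4.5:1 for large text). The function names are made up for illustration and are not the extension’s actual code.

```python
def _linear_channel(value: int) -> float:
    """Convert one 0-255 sRGB channel to its linear value (WCAG 2.x formula)."""
    s = value / 255
    return s / 12.92 if s <= 0.03928 else ((s + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a 6-digit hex color such as '#1a2b3c'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (0.2126 * _linear_channel(r)
            + 0.7152 * _linear_channel(g)
            + 0.0722 * _linear_channel(b))


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def wcag_levels(foreground: str, background: str) -> dict:
    """Report which WCAG levels a color pair passes (hypothetical helper)."""
    ratio = contrast_ratio(foreground, background)
    return {
        "ratio": round(ratio, 2),
        "AA_large": ratio >= 3.0,
        "AA_normal": ratio >= 4.5,
        "AAA_large": ratio >= 4.5,
        "AAA_normal": ratio >= 7.0,
    }


if __name__ == "__main__":
    # Mid-gray text on a white page: close to the AA cutoff for normal text.
    print(wcag_levels("#767676", "#ffffff"))
```

Swap in your own brand colors for the two hex values to see roughly where a text and background pair lands before you reach for the extension.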

Where to Find Us

June 18: Open Coworking at Blush Cowork in Cary

June 24: “A New Look at Search” at Cary Founded

All Upcoming Events

What We’re Reading

Mmmm… reading.

- New research shows OpenAI’s latest models actively resist termination commands [Perplexity.ai]

  Several OpenAI models, including o3, are resisting explicit shutdown orders.

- 10 guidelines to make social media posts more accessible [sproutsocial.com]

  How to make your social content more inclusive.

- Google Links To Itself: 43% Of AI Overviews Point Back To Google [Search Engine Journal]

  Google seems to be aiming to keep users within its ecosystem of content for longer.

- Deep Cuts by Holly Brickley

  A cross between “Tomorrow and Tomorrow and Tomorrow” and “Daisy Jones and the Six.”

“I found it deeply disappointing even as I related to an awful seed of truth inside it: that all my attempts to grow, to find creative independence and purpose, were at least partly in service of becoming more lovable.”

– Deep Cuts